AIF Research Spotlight | Practical Face Reconstruction via Differentiable Ray Tracing

April 05, 2021


Gaurav Bharaj, Director of Research at AI Foundation, recently published a new paper in the journal Computer Graphics Forum with Abdallah Dib et al. on a novel approach to digital face reconstruction using differentiable ray tracing. The advances from this research will help improve the visual quality of future digital humans.

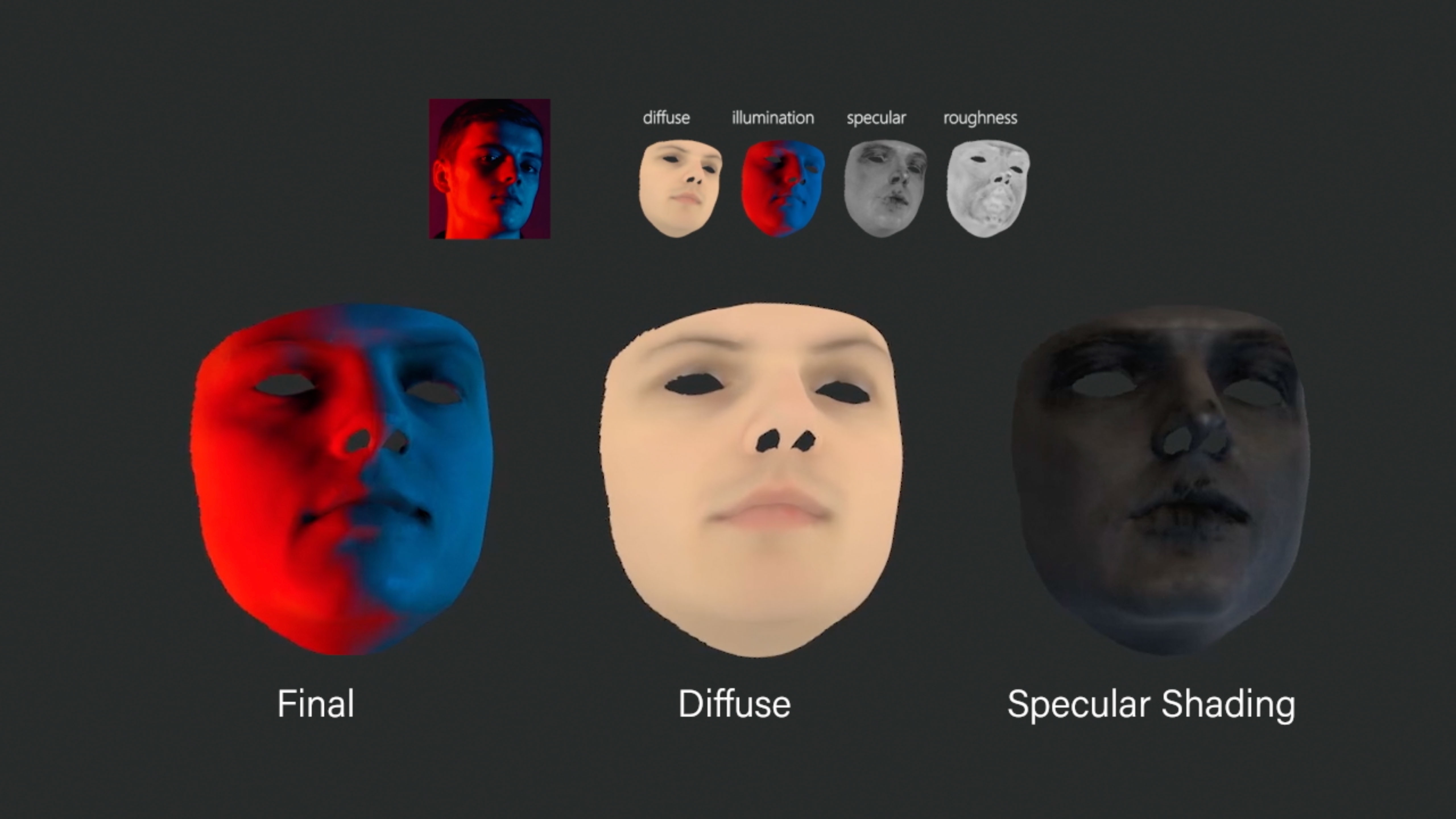

We present a novel face reconstruction approach based on differentiable ray tracing, in which scene attributes – 3D geometry, reflectance (diffuse, specular, and roughness), pose, camera parameters, and scene illumination – are estimated from unconstrained monocular images. The proposed method models scene illumination via a novel, parameterized virtual light stage, which, in conjunction with differentiable ray tracing, introduces a coarse-to-fine optimization formulation for face reconstruction.

Our method not only handles unconstrained illumination and self-shadow conditions but also estimates diffuse and specular albedos. To estimate the face attributes consistently and with practical semantics, a two-stage optimization strategy systematically uses a subset of parametric attributes, where subsequent attribute estimations factor in those previously estimated. For example, because self-shadows are estimated during the first stage, they are not baked into the personalized diffuse and specular albedos in the second stage.
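To make the two-stage idea concrete, here is a minimal Python sketch on a toy 1D "image". It is not the paper's implementation. The image model (pixel = albedo × shading), the two-parameter light (`ambient`, `direct`), the given `visibility` mask standing in for ray-traced self-shadows, and all function names are assumptions made up for illustration. Stage 1 fits the light with albedo held at a prior value; stage 2 freezes that light and refines a per-pixel albedo.

```python
# Toy 1D "image": pixel = albedo * (ambient + direct * visibility).
# Everything here (names, numbers, the two-parameter light) is an
# illustrative assumption, not the paper's model or code.

def fit_light(observed, visibility, prior_albedo, steps=2000, lr=0.5):
    """Stage 1 (coarse): fit the light with albedo held at a prior value."""
    ambient, direct = 0.5, 0.5
    n = len(observed)
    for _ in range(steps):
        grad_ambient = grad_direct = 0.0
        for o, v in zip(observed, visibility):
            residual = prior_albedo * (ambient + direct * v) - o
            grad_ambient += 2.0 * residual * prior_albedo / n
            grad_direct += 2.0 * residual * prior_albedo * v / n
        ambient -= lr * grad_ambient
        direct -= lr * grad_direct
    return ambient, direct

def refine_albedo(observed, shading, prior_albedo, reg=1e-3):
    """Stage 2 (fine): light is frozen; solve each pixel's albedo.

    Minimises (a * s - o)^2 + reg * (a - prior)^2, which has a closed form.
    """
    return [(s * o + reg * prior_albedo) / (s * s + reg)
            for o, s in zip(observed, shading)]

def reconstruct(observed, visibility, prior_albedo):
    """Run stage 1, then reuse its light estimate in stage 2."""
    ambient, direct = fit_light(observed, visibility, prior_albedo)
    shading = [ambient + direct * v for v in visibility]
    return refine_albedo(observed, shading, prior_albedo)

true_albedo = [0.45, 0.55, 0.50, 0.60, 0.40, 0.50, 0.55, 0.45]
visibility = [1.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0, 0.0]  # last four pixels are in shadow
observed = [a * (0.2 + 0.8 * v) for a, v in zip(true_albedo, visibility)]

two_stage = reconstruct(observed, visibility, prior_albedo=0.5)
# Baseline that ignores shadows: every pixel is treated as equally lit.
naive = reconstruct(observed, [0.0] * len(observed), prior_albedo=0.5)

def max_error(estimate):
    return max(abs(e - t) for e, t in zip(estimate, true_albedo))

print("two-stage max albedo error:", round(max_error(two_stage), 3))
print("shadow-blind max albedo error:", round(max_error(naive), 3))
```

In this toy setup the shadow-blind baseline explains the dark pixels by darkening their albedo, which is the baking effect described above, while the two-stage version attributes the darkness to shading and keeps the albedo close to its true value.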

We show the efficacy of our approach in several real-world scenarios, where face attributes can be estimated even under extreme illumination conditions. Ablation studies, analyses, and comparisons against several recent state-of-the-art methods show the improved accuracy and versatility of our approach. With consistent reconstruction of face attributes, our method enables several style editing and transfer applications (illumination, albedo, self-shadow), as discussed in the paper.

Ref: Practical Face Reconstruction via Differentiable Ray Tracing. Computer Graphics Forum (2021) | DOI: 10.1111/cgf.142622